In cybersecurity, popularity can be a curse: just ask ChatGPT, whose success has spawned dozens of malicious copycats since the AI tool launched in November 2022.

Cyble Research and Intelligence Labs (CRIL) identified over 50 fake and malicious apps that use the ChatGPT icon to carry out harmful activities. These apps belong to various malware families, including potentially unwanted programs (PUPs), adware, spyware, and billing fraud.

The use of typosquatted domains and fake social media pages has made it difficult for users to differentiate between legitimate and fake websites.

Android malware has also been found using ChatGPT's name and icon to trick users into downloading fake applications that steal sensitive information from their devices.

ChatGPT clones on the internet and social media

Researchers at CRIL have identified several instances where threat actors have taken advantage of ChatGPT’s popularity to distribute malware and carry out other cyber-attacks.

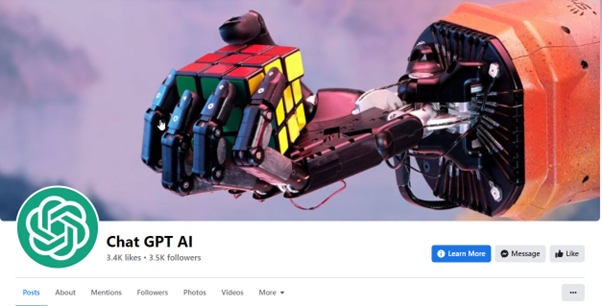

“CRIL has identified an unofficial ChatGPT social media page with a substantial following and likes, which features multiple posts about ChatGPT and other OpenAI tools,” said the report.

“The page seems to be trying to build credibility by including a mix of content, such as videos and other unrelated posts. However, a closer look revealed that some posts on the page contain links that lead users to phishing pages that impersonate ChatGPT.”

“The post features a link that leads to a typosquatted domain, masquerading as the official website of ChatGPT. This can mislead users into thinking they are accessing ChatGPT’s official website and induce them to try ChatGPT for PC,” said the report.

CRIL researchers also discovered several typosquatted domains related to OpenAI and ChatGPT being used in phishing attacks that distribute notorious malware families, including Lumma Stealer, Aurora Stealer, and clipper malware.

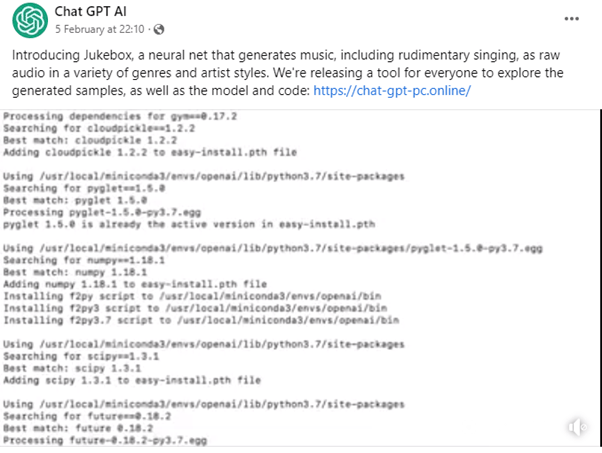

Another post on the same social media page promotes Jukebox, OpenAI's AI-powered tool for generating music and audio. However, the post contains a link to a typosquatted domain, “hxxps://chat-gpt-pc.online”.

“Both typosquatted domains ultimately lead to a counterfeit OpenAI website that appears to be the genuine official website. This fake website presents users with a ‘DOWNLOAD FOR WINDOWS’ button, which, when clicked, downloads potentially harmful executable files,” the report added.

ChatGPT clones, financial fraud, and Android malware

Threat actors are exploiting the ChatGPT and OpenAI brands not only to distribute stealers and other malware but also to commit financial fraud. One common tactic is creating fake ChatGPT-related payment pages that trick victims into handing over money and credit card information.

Among the more than 50 malicious apps that CRIL found using the ChatGPT icon, some conduct billing fraud. One of them uses ChatGPT's name and icon but has no AI functionality; it belongs to the SMS fraud malware family.

The malware checks for specific network operators and subscribes victims to premium services without their knowledge, sending an SMS to the premium number “+4761597”.

“We have identified an additional five SMS fraud applications pretending to be ChatGPT [that] are engaged in billing fraud, resulting in victims losing their money. These fraudulent applications are designed to drain the wallets of unsuspecting individuals,” said the report.

ChatGPT, malicious use, and failing controls

The Cyber Express previously reported on ChatGPT users bypassing the platform's restrictions to create malicious content, from phishing emails to malware.

“ChatGPT’s increased popularity also carries increased risk. For example, Twitter is replete with examples of malicious code or dialogues generated by ChatGPT. Although OpenAI has invested tremendous effort into stopping abuse of its AI, it can still be used to produce dangerous code,” noted a Check Point analysis of the situation.

In January, The Cyber Express reported on a fake ChatGPT app on the Apple App Store that impersonated ChatGPT while selling paid subscriptions to a chatbot that is available for free.

The allegedly fake app charges users as much as $7.99 for a weekly subscription to a service that is already available for free.